Applies to: Microsoft Hyper-V Server 2016, Windows Server 2016, Windows Server 2019, Microsoft Hyper-V Server 2019

Starting with Windows Server 2016, you can use Discrete Device Assignment (DDA) to pass an entire PCIe device into a VM. This allows high-performance access to devices such as NVMe storage or graphics cards from within a VM while leveraging the device's native drivers. See Plan for Deploying Devices using Discrete Device Assignment for details on which devices work, the possible security implications, and more.


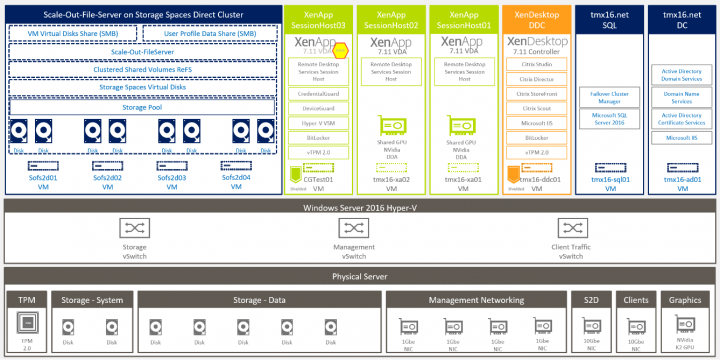


There are three steps to using a device with Discrete Device Assignment:

- Configure the VM for Discrete Device Assignment

- Dismount the Device from the Host Partition

- Assigning the Device to the Guest VM

All commands can be run on the host from a Windows PowerShell console as an Administrator.

Configure the VM for DDA

Discrete Device Assignment imposes some restrictions on VMs, so the following step must be taken.

- Set the “Automatic Stop Action” of the VM to TurnOff
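A minimal sketch of this step, assuming the Hyper-V PowerShell module is available on the host. The VM name `ddatest1` is only a placeholder; substitute your own VM's name.

```powershell
# Placeholder VM name (assumption); replace with the name of your VM
$vm = "ddatest1"

# DDA requires the automatic stop action to be TurnOff rather than Save or ShutDown
Set-VM -Name $vm -AutomaticStopAction TurnOff

# Confirm the setting took effect
Get-VM -Name $vm | Select-Object Name, AutomaticStopAction
```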

Some additional VM preparation is required for graphics devices

Some hardware performs better if the VM is configured in a certain way. To find out whether your hardware needs the following configurations, contact the hardware vendor. Additional details can be found in Plan for Deploying Devices using Discrete Device Assignment and in this blog post.

- Enable Write-Combining on the CPU

- Configure the 32 bit MMIO space

- Configure greater than 32 bit MMIO space
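The three settings in this list can be sketched with `Set-VM` as follows. The VM name is a placeholder, and the MMIO sizes (3 GB low, 33280 MB high) are assumed starting points for experimenting with a single GPU, not values calculated for any particular device.

```powershell
# Placeholder VM name (assumption); replace with the name of your VM
$vm = "ddatest1"

# Enable write-combining on the CPU by letting the guest control cache types
Set-VM -VMName $vm -GuestControlledCacheTypes $true

# Configure the 32-bit MMIO space (assumed starting value)
Set-VM -VMName $vm -LowMemoryMappedIoSpace 3Gb

# Configure the greater-than-32-bit MMIO space (assumed starting value)
Set-VM -VMName $vm -HighMemoryMappedIoSpace 33280Mb
```

The VM must be turned off when these settings are changed.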

Tip

The MMIO space values above are reasonable values to set for experimenting with a single GPU. If after starting the VM, the device is reporting an error relating to not enough resources, you'll likely need to modify these values. Consult Plan for Deploying Devices using Discrete Device Assignment to learn how to precisely calculate MMIO requirements.

Dismount the Device from the Host Partition

Optional - Install the Partitioning Driver

Discrete Device Assignment gives hardware vendors the ability to provide a security mitigation driver with their devices. Note that this driver is not the same as the device driver that will be installed in the guest VM. Providing this driver is at the hardware vendor's discretion; if they do provide it, install it before dismounting the device from the host partition. Contact the hardware vendor to find out whether they have a mitigation driver.

If no Partitioning driver is provided, during dismount you must use the -force option to bypass the security warning. Please read more about the security implications of doing this on Plan for Deploying Devices using Discrete Device Assignment.

Locating the Device's Location Path

The PCI location path is required to dismount and mount the device from the host. An example location path looks like the following: 'PCIROOT(20)#PCI(0300)#PCI(0000)#PCI(0800)#PCI(0000)'. More details on locating the location path can be found here: Plan for Deploying Devices using Discrete Device Assignment.
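One way to read the location path from PowerShell is sketched below, using the PnpDevice module's `Get-PnpDevice` and `Get-PnpDeviceProperty` cmdlets. Filtering on the `Display` class and taking the first match are assumptions made for illustration; adjust the filter so it selects the device you actually intend to assign.

```powershell
# List the display-class devices currently present on the host
Get-PnpDevice -PresentOnly -Class Display |
    Format-Table FriendlyName, Manufacturer, InstanceId -AutoSize

# Illustrative choice (assumption): take the first display device found
$dev = Get-PnpDevice -PresentOnly -Class Display | Select-Object -First 1

# Read the location paths property; the first entry is the PCIROOT(...) form
$locationPath = (Get-PnpDeviceProperty -InstanceId $dev.InstanceId `
    -KeyName DEVPKEY_Device_LocationPaths).Data[0]

$locationPath
```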

Disable the Device

Using Device Manager or PowerShell, ensure the device is “disabled.”
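For the PowerShell route, a sketch using `Disable-PnpDevice` is shown here. The device selection (first present display-class device) is an assumption for illustration only.

```powershell
# Illustrative choice (assumption): the first present display-class device
$dev = Get-PnpDevice -PresentOnly -Class Display | Select-Object -First 1

# Disable the device on the host without prompting for confirmation
Disable-PnpDevice -InstanceId $dev.InstanceId -Confirm:$false

# Check the device state afterwards
Get-PnpDevice -InstanceId $dev.InstanceId | Select-Object FriendlyName, Status
```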

Dismount the Device

Depending on whether the vendor provided a mitigation driver, you may or may not need to use the “-force” option.

- If a Mitigation Driver was installed

- If a Mitigation Driver was not installed
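Both cases can be sketched with `Dismount-VMHostAssignableDevice`. The location path below is the example path quoted earlier on this page and stands in for your device's real path; run only the variant that applies to you.

```powershell
# Example location path from this page (placeholder for your device's path)
$locationPath = 'PCIROOT(20)#PCI(0300)#PCI(0000)#PCI(0800)#PCI(0000)'

# Variant 1: a mitigation driver was installed
Dismount-VMHostAssignableDevice -LocationPath $locationPath

# Variant 2: no mitigation driver was installed, so the security
# warning has to be bypassed explicitly with -Force
# Dismount-VMHostAssignableDevice -Force -LocationPath $locationPath
```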

Assigning the Device to the Guest VM

The final step is to tell Hyper-V that a VM should have access to the device. In addition to the location path found above, you'll need to know the name of the VM.
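A sketch of the assignment using `Add-VMAssignableDevice`. Both the VM name and the location path are placeholders (the path is the example quoted earlier on this page).

```powershell
# Placeholders (assumptions): replace with your VM name and device path
$vm = "ddatest1"
$locationPath = 'PCIROOT(20)#PCI(0300)#PCI(0000)#PCI(0800)#PCI(0000)'

# Assign the dismounted device to the guest VM
Add-VMAssignableDevice -LocationPath $locationPath -VMName $vm

# List the devices now assigned to the VM
Get-VMAssignableDevice -VMName $vm
```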

What's Next

After a device is successfully mounted in a VM, you can start that VM and interact with the device as you normally would on a bare-metal system. This means that you can now install the hardware vendor's drivers in the VM, and applications will be able to see the hardware. You can verify this by opening Device Manager in the guest VM and confirming that the hardware now shows up.

Removing a Device and Returning it to the Host

If you want to return the device to its original state, you will need to stop the VM, remove the device from the VM, and mount it back on the host.

You can then re-enable the device in Device Manager, and the host operating system will be able to interact with the device again.
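A sketch of returning the device, using `Remove-VMAssignableDevice` followed by `Mount-VMHostAssignableDevice`. The VM name and location path are placeholders.

```powershell
# Placeholders (assumptions): replace with your VM name and device path
$vm = "ddatest1"
$locationPath = 'PCIROOT(20)#PCI(0300)#PCI(0000)#PCI(0800)#PCI(0000)'

# The VM must be stopped before the device can be removed
Stop-VM -Name $vm

# Remove the device from the VM
Remove-VMAssignableDevice -LocationPath $locationPath -VMName $vm

# Mount the device back in the host partition
Mount-VMHostAssignableDevice -LocationPath $locationPath
```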

Example

Mounting a GPU to a VM

In this example we use PowerShell to configure a VM named “ddatest1”, find the first available GPU manufactured by NVIDIA, and assign it to the VM.
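The whole flow can be sketched end to end as follows. This assumes no mitigation driver is installed (hence `-Force`), that the VM is turned off, and that the MMIO sizes shown are acceptable starting values for a single GPU; the `NVIDIA*` manufacturer filter is an illustrative way to select the device.

```powershell
# Configure the VM for Discrete Device Assignment
$vm = "ddatest1"

# Set the automatic stop action to TurnOff
Set-VM -Name $vm -AutomaticStopAction TurnOff
# Enable write-combining on the CPU
Set-VM -VMName $vm -GuestControlledCacheTypes $true
# Configure the 32-bit MMIO space (assumed starting value)
Set-VM -VMName $vm -LowMemoryMappedIoSpace 3Gb
# Configure the greater-than-32-bit MMIO space (assumed starting value)
Set-VM -VMName $vm -HighMemoryMappedIoSpace 33280Mb

# Find the display devices on the host that are manufactured by NVIDIA
$gpudevs = @(Get-PnpDevice -PresentOnly |
    Where-Object { $_.Class -like "Display" -and $_.Manufacturer -like "NVIDIA*" })
if ($gpudevs.Count -eq 0) { throw "No NVIDIA display device found on this host." }

# Take the first matching GPU and read its location path
$gpu = $gpudevs[0]
$locationPath = (Get-PnpDeviceProperty -InstanceId $gpu.InstanceId `
    -KeyName DEVPKEY_Device_LocationPaths).Data[0]

# Disable the device on the host
Disable-PnpDevice -InstanceId $gpu.InstanceId -Confirm:$false

# Dismount the device from the host (no mitigation driver, so -Force)
Dismount-VMHostAssignableDevice -Force -LocationPath $locationPath

# Assign the device to the guest VM
Add-VMAssignableDevice -LocationPath $locationPath -VMName $vm
```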

Troubleshooting


If you've passed a GPU into a VM but Remote Desktop or an application isn't recognizing the GPU, check for the following common issues:

- Make sure you've installed the most recent version of the GPU vendor's supported driver and that the driver isn't reporting errors by checking the device state in Device Manager.

- Make sure your device has enough MMIO space allocated within the VM. To learn more, see MMIO Space.

- Make sure you're using a GPU that the vendor supports being used in this configuration. For example, some vendors prevent their consumer cards from working when passed through to a VM.

- Make sure the application being run supports running inside a VM, and that both the GPU and its associated drivers are supported by the application. Some applications have allow-lists of GPUs and environments.

- If you're using the Remote Desktop Session Host role or Windows MultiPoint Services on the guest, make sure that a specific Group Policy entry is set to allow use of the default GPU. Using a Group Policy Object applied to the guest (or the Local Group Policy Editor on the guest), navigate to the following Group Policy item: Computer Configuration > Administrative Templates > Windows Components > Remote Desktop Services > Remote Desktop Session Host > Remote Session Environment > Use the hardware default graphics adapter for all Remote Desktop Services sessions. Set this value to Enabled, then reboot the VM once the policy has been applied.
